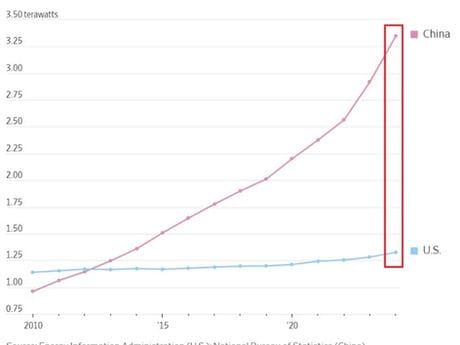

China's Dominance in Power Generation Underpins Its Edge in the AI Race

China's electricity generation capacity of 3,010 GW — including 521 GW of renewables added in 2023 alone — gives it a structural cost advantage in AI infrastructure that cannot be replicated by Western competitors on any credible timeline.

The conversation about China's AI capabilities focuses almost exclusively on models, chips, and data. It consistently underweights the factor that will ultimately determine who can scale AI infrastructure fastest: electricity. China's installed power generation capacity of 3,010 GW is no accident. It is the product of two decades of deliberate industrial policy, and it gives Chinese AI infrastructure a structural cost advantage that Western capital alone cannot quickly erase.

The Scale of China's Energy Advantage

In 2023, China installed 521 GW of renewable energy capacity, more than the rest of the world combined and more than the entire installed capacity of France and Germany together. Electricity generation from renewables reached 3,033 TWh, approximately equal to the entire electricity consumption of India. Average industrial electricity prices in China's interior provinces run at $0.04-0.06 per kWh, roughly one-third of comparable US industrial rates and one-fifth of European rates. A large-scale AI training cluster drawing 100 MW continuously uses about 876 GWh a year, so at those ratios the price differential is worth roughly $70-105 million per year against US rates and $140-210 million per year against European rates.
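The arithmetic behind the 100 MW comparison can be sketched as follows. This is a minimal illustration that takes the article's own figures at face value: the $0.04-0.06 per kWh range and the "one-third" and "one-fifth" ratios, read here as US and European prices being three and five times the Chinese price. The function names are hypothetical, and the sketch assumes a perfectly constant load with no cooling overhead, demand charges, or taxes.

```python
HOURS_PER_YEAR = 8760  # 365 days x 24 hours, ignoring leap years


def annual_cost_usd(load_mw: float, price_per_kwh: float) -> float:
    """Annual electricity cost in USD for a constant load."""
    kwh_per_year = load_mw * 1_000 * HOURS_PER_YEAR  # MW -> kW, then kWh
    return kwh_per_year * price_per_kwh


def annual_savings_range(load_mw: float, china_low: float, china_high: float,
                         price_multiple: float) -> tuple[float, float]:
    """Savings versus a region whose price is `price_multiple` times China's.

    Returns (low, high) in USD, evaluated at both ends of the Chinese
    price range. `price_multiple` is an assumption taken from the
    article's stated ratios, not from measured tariffs.
    """
    low = annual_cost_usd(load_mw, china_low * (price_multiple - 1))
    high = annual_cost_usd(load_mw, china_high * (price_multiple - 1))
    return low, high


if __name__ == "__main__":
    for region, multiple in (("US", 3.0), ("Europe", 5.0)):
        low, high = annual_savings_range(100, 0.04, 0.06, multiple)
        print(f"{region}: ${low / 1e6:.0f}M to ${high / 1e6:.0f}M per year")
```

On these inputs a single 100 MW cluster saves on the order of $70-105 million a year against US rates and $140-210 million against European rates. Savings reach the hundreds of millions of dollars once the comparison is against European prices or spans several such clusters.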

The Data Centre Buildout

China's government has designated Inner Mongolia, Guizhou, and Ningxia as national data centre hubs, directing cloud providers to locate compute infrastructure in these low-cost, renewable-energy-rich inland regions. Alibaba Cloud, Tencent Cloud, Huawei Cloud, and ByteDance have all made substantial commitments. The resulting compute cost per AI inference is structurally lower than on equivalent US or European infrastructure, creating a durable advantage in serving price-sensitive AI application markets: the mass market, not the premium enterprise segment.

The Inference Arbitrage

Training large frontier models requires enormous compute bursts. Inference (running a trained model to serve user queries) requires continuous, lower-intensity compute at massive scale. The economics of inference heavily favour low electricity cost. China's energy advantage therefore becomes more valuable as AI transitions from training-centric to inference-centric infrastructure, a transition that is well underway. The $0.02 per million tokens that Chinese AI providers charge (versus $0.15 or more from US competitors) is partly a competitive strategy and partly a reflection of genuine structural cost advantage.
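A rough way to separate the "structural" part of that price gap from the "strategy" part is to ask how much cheaper power alone could make inference. The sketch below is illustrative only. It uses the article's token prices and its "one-third of US industrial rates" ratio, while the electricity shares of inference cost (30% and 100%) are hypothetical placeholders, not figures from the article or from any provider.

```python
US_PRICE_PER_M_TOKENS = 0.15     # article's figure (a lower bound, "$0.15+")
CHINA_PRICE_PER_M_TOKENS = 0.02  # article's figure
POWER_PRICE_RATIO = 3.0          # US power price / China power price, per the article

PRICE_GAP = US_PRICE_PER_M_TOKENS / CHINA_PRICE_PER_M_TOKENS  # about 7.5x


def cost_reduction_from_power(electricity_share: float,
                              power_price_ratio: float) -> float:
    """Factor by which total cost falls when only electricity gets cheaper.

    electricity_share: fraction of the baseline cost that is electricity
    (0 to 1). This is an assumed input, not a measured value.
    """
    new_cost = (1 - electricity_share) + electricity_share / power_price_ratio
    return 1 / new_cost


if __name__ == "__main__":
    for share in (0.3, 1.0):  # hypothetical shares; 1.0 is the upper bound
        factor = cost_reduction_from_power(share, POWER_PRICE_RATIO)
        residual = PRICE_GAP / factor
        print(f"electricity share {share:.0%}: power explains {factor:.2f}x, "
              f"leaving {residual:.1f}x to other factors")
```

Even in the limiting case where electricity is the entire cost of inference, a threefold power price advantage explains at most a threefold price gap. The remainder of the roughly 7.5x difference has to come from elsewhere, such as hardware costs, model efficiency, margins, or deliberate underpricing.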

Investment Implications

For Hong Kong-focused investors, the relevant plays are: Chinese cloud infrastructure operators (Alibaba and Tencent through their cloud units, and Kingsoft Cloud) that benefit from low-cost energy in their inference economics; Chinese renewable energy developers (China Yangtze Power and Longi Green Energy, both listed in Shanghai rather than Hong Kong) that supply the power enabling this buildout; and Chinese AI application companies whose unit economics are structurally superior to those of US counterparts because of energy cost differences. The main risk is that US export controls limiting Chinese access to frontier chips constrain the training side of the equation regardless of energy cost advantages. The inference opportunity, built on already-trained models, is less exposed to this constraint.